I am regularly stunned by what ChatGPT's GPT-4 model can do. As I wrote this article, I asked it questions about the accuracy of what I was saying. It gave me excellent feedback, in its typical genteel manner, explaining what I got right and what needed tweaking. Then, when I finished a draft, I ran it by ChatGPT, and again it gave me helpful feedback.

This astonishes me. It’s one thing to generate text based on a simple prompt, and another to be able to assimilate what I’ve written and then advise me how to improve it.

I’m in good company: the developers themselves are often surprised at what Large Language Models can do.

Take, for example, ChatGPT’s ability to translate between languages. The lead developer of ChatGPT was himself surprised when it was first discovered that their new model was capable of translation. Unlike Google Translate, it wasn’t trained on paired translations (such as the same content in French and English). Instead, ChatGPT was simply fed billions of words of text. And somehow it figured out how to translate.

How could it do this?

The Large Language Model (LLM) on which ChatGPT is based learns underlying patterns from the text data that it’s fed. What are these patterns? Their exact nature is not fully understood, because what goes on under the hood entails such intricate statistical analysis.

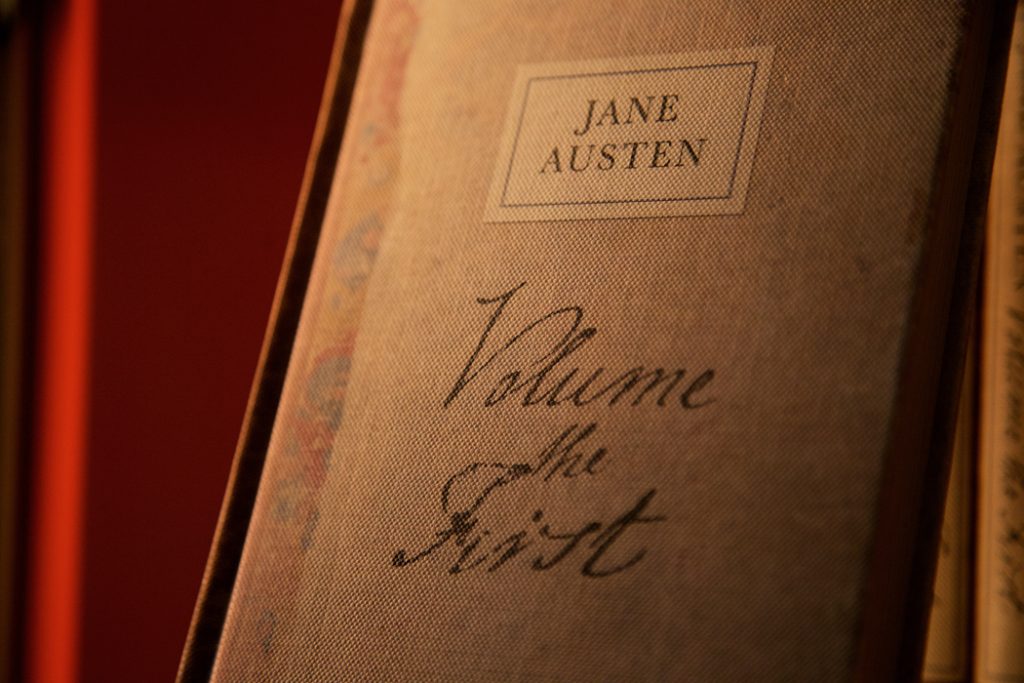

These models learn by making predictions and then comparing those predictions to the data they have been fed. To illustrate how the process works, a simple LLM called BabyGPT, small enough to run on a laptop, was created and fed the works of Jane Austen. Because it is so simple, it’s easier to watch it at work. A New York Times writer fed it the prompt, “‘You must decide for yourself,’ said Elizabeth.”

Using its neural network, BabyGPT started making predictions about the patterns in the data. Its first prediction was “?grThbE22]i10anZOj1A2u ‘T-t’wMO ZeVsa.fOJC1hpndrsR 6?.” It then compared that with the data it was fed and updated its weights based on the error between its predictions and the actual text.

As it made more predictions and compared them with the data, the weights were adjusted so its future predictions would be more accurate. After 500 rounds, its output looked like this: “gra buseriteand the utharth satouchanders nd shadeto the se owrerer.”

As it learned the underlying patterns in the data, the result was starting to look more like words. After 5,000 rounds and 10 minutes, its output looked like this: “rather repeated an unhappy confirmed, ‘as now it is a few eyes.’”
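For readers who like to see the mechanics, here is a minimal sketch in Python of that predict, compare, and adjust loop. It is not BabyGPT's code, and it is far simpler than any real LLM: it only learns which character tends to follow which, using a table of weights instead of a deep neural network. The names `train_bigram` and `most_likely_next`, and the settings `rounds` and `learning_rate`, are illustrative assumptions rather than anything taken from an actual system.

```python
import math


def softmax(scores):
    """Turn a list of raw scores into probabilities that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def train_bigram(text, rounds=200, learning_rate=0.5):
    """Learn next-character weights from text; return (vocab, weights, losses)."""
    vocab = sorted(set(text))
    index = {ch: i for i, ch in enumerate(vocab)}
    size = len(vocab)
    # weights[i][j] is the score for character j following character i.
    # Everything starts at zero, so the first predictions are pure guesses.
    weights = [[0.0] * size for _ in range(size)]
    pairs = [(index[a], index[b]) for a, b in zip(text, text[1:])]
    losses = []
    for _ in range(rounds):
        total_loss = 0.0
        grads = [[0.0] * size for _ in range(size)]
        for prev, actual in pairs:
            # Predict: probabilities for every possible next character.
            probs = softmax(weights[prev])
            # Compare: the loss is large when the true character got low probability.
            total_loss -= math.log(probs[actual])
            for j in range(size):
                grads[prev][j] += probs[j] - (1.0 if j == actual else 0.0)
        # Adjust: nudge every weight in the direction that reduces the error.
        for i in range(size):
            for j in range(size):
                weights[i][j] -= learning_rate * grads[i][j] / len(pairs)
        losses.append(total_loss / len(pairs))
    return vocab, weights, losses


def most_likely_next(vocab, weights, ch):
    """Return the character the model currently rates most likely to follow ch."""
    row = weights[vocab.index(ch)]
    return vocab[row.index(max(row))]


sample = "you must decide for yourself, said elizabeth."
vocab, weights, losses = train_bigram(sample)
print("average error in the first round:", round(losses[0], 3))
print("average error in the last round: ", round(losses[-1], 3))
```

The average error shrinks from round to round, which is the same story as BabyGPT's output drifting from gibberish toward recognizable words, only on a much smaller scale.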

It doesn’t make sense, but now it’s producing actual words. This is how LLMs work. Basically, they are computer programs that teach themselves the underlying patterns in language. They learn from data without explicit rules being provided by humans. They adjust their internal parameters, or weights, to recognize and reproduce patterns in the data they’re trained on. These underlying patterns allow ChatGPT to predict what words or parts of words come next when you give it a prompt.
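To make "predicting what comes next" concrete, here is a deliberately crude sketch in Python. It simply counts which word follows which in a sample text and then picks the most frequent follower. Real LLMs do not work from a lookup table like this; they use neural networks and take far more context into account. The function names `build_next_word_table` and `predict_next` and the sample sentence are hypothetical, chosen only for illustration.

```python
from collections import Counter, defaultdict


def build_next_word_table(text):
    """Count, for each word in the text, which words follow it and how often."""
    words = text.lower().split()
    table = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        table[current][following] += 1
    return table


def predict_next(table, word):
    """Return the most frequent follower of word, or None if the word is unseen."""
    counts = table.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


table = build_next_word_table("the cat sat on the mat and the cat slept")
print(predict_next(table, "the"))
```

In this sample, "the" is followed by "cat" twice and "mat" once, so the sketch predicts "cat". No one wrote a rule saying so; the prediction comes entirely from the text it was given.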

And this is where the mystery comes in. Developers of LLM technology are unable to see these underlying patterns. LLMs have trained themselves, and in the process, they have developed capabilities that the developers weren’t expecting. The developers tend to find this both thrilling and a bit scary.

So why can’t the developers see what these patterns are? Neural networks can have millions or billions of parameters. These vast numbers make it hard to discern clear patterns or rules. Plus, each neuron in a neural network contributes to many small tasks, rather than one neuron representing a single word. Also, the “hidden layers” within the neural network, which process and refine information, are abstract and may not actually align with concepts we humans can understand.

Bottom line: LLMs are seemingly intelligent, but in ways that so far remain a mystery.

Developers are eagerly trying to figure out ways to peer under the hood. One research scientist used Amazon reviews to create a manageable LLM and was able to look into the hidden layers. He found that the model had devoted a single neuron to the sentiment of the reviews, even though it had never been explicitly trained to recognize sentiment.

Critics sometimes say that AI is simply summarizing text that it’s been fed. But that’s not accurate. Rather, it uses the patterns it has discovered through statistical analysis to generate coherent sentences and paragraphs that often seem to go beyond its training data.

I’m fascinated. And amazed. Yet, personally, I like to think it’s not surprising. I like to think that there’s an underlying intelligence in all of existence, and that language, and life itself, is a process of manifesting this underlying intelligence. For me, AI is deepening my appreciation of this.

Find column archives at JimKarpen.com.